AI Readiness Assessment: A Free 6-Point Scorecard

Last updated: April 2026

A real AI readiness assessment takes about four hours. If a consultancy is quoting you eight weeks and $200K, what they’re selling isn’t an assessment. It’s a billable engagement dressed up as a diagnostic.

We’ve run this exercise inside Fortune 500 companies and with scrappy mid-market teams that worked through our scorecard themselves over a Tuesday. The mechanics are the same either way: six dimensions worth measuring, each scored on a four-point scale, and a clear set of next steps based on where you land. That’s the whole thing.

This piece gives you the scorecard, the rubric, and what to do at each score. If you’re trying to figure out whether your organization is ready to move from AI experiments to AI in production, this article is all you need to start scoring. We’ll point you to additional resources at the end if you want them.

What an AI readiness assessment actually measures

An AI readiness assessment measures the gap between the AI use cases you want to ship and the organizational, technical, and operational conditions required to ship them. It’s not a quiz about whether you’ve heard of vector databases. It’s a structured look at six dimensions: data, platforms, skills, use cases, governance, and economics.

A useful assessment does three things. First, it produces a score that’s diagnostic, not aspirational, meaning the questions are specific enough that you can’t accidentally rate yourself a 4 because you’re optimistic. Second, it points to actions, not just gaps, so you finish the exercise knowing what to do this quarter. Third, it works without consultants, because the people who live in your systems every day know more about your readiness than anyone you’d hire.

When a readiness assessment costs $200K, it isn’t because the diagnostic itself is hard. It’s because the consultancy is bundling the diagnostic with strategy work, change management, and a 200-page deliverable nobody reads. The diagnostic is four hours of work. The rest is consulting upsell.

The six dimensions that determine AI readiness

Across the many assessments we’ve run, six dimensions consistently separate organizations that ship AI from organizations stuck in pilot purgatory. They are:

1. Data. Is your data accessible, trustworthy, and shaped for the questions AI will ask? This is the dimension we see fail most often. Pilots succeed on hand-curated data. Production systems fail on that same data once nobody is cleaning it by hand.

2. Platforms. Do the platforms your business runs on (CRM, ERP, support, finance) expose the right APIs and identity model for AI agents to act on them? As of 2026, most enterprise platforms do. The question is whether yours are configured to use those capabilities or stuck on a 2019 implementation.

3. Skills. Do you have engineers, product people, and operators who can build, ship, and maintain AI systems? Or does every AI conversation route to one overloaded ML engineer who’s also handling data engineering, MLOps, and prompt design?

4. Use cases. Have you identified specific, scoped, measurable AI use cases with named owners and clear success metrics? Or do you have a slide that says “AI for everything” and a Slack channel full of ideas?

5. Governance. Do you have policies in place for what data AI can access, what actions it can take, and how to audit what it did? Without this, your legal and security teams will block production deployment, and they should.

6. Economics. Do you understand the unit economics of the AI workloads you’re considering? Token costs, inference costs, the cost of being wrong? AI projects that ignore economics tend to ship and then quietly get turned off six months later.
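
To make the economics question concrete, here is a minimal Python sketch of a per-task cost model. It assumes a simple expected-value formula: token spend, plus any other per-task inference or hosting cost, plus the error rate times the cost of one wrong output. The class, its field names, and every number in the example are hypothetical placeholders, not real vendor prices.

```python
from dataclasses import dataclass


@dataclass
class TaskCostInputs:
    """Hypothetical per-task inputs for one AI workload (illustrative only)."""
    input_tokens: int
    output_tokens: int
    price_per_1k_input: float   # USD per 1,000 input tokens (assumed pricing model)
    price_per_1k_output: float  # USD per 1,000 output tokens (assumed pricing model)
    infra_cost: float           # other inference/hosting cost per task, in USD
    error_rate: float           # share of tasks that come out wrong, 0.0 to 1.0
    cost_per_error: float       # USD to detect and fix one wrong output


def expected_cost_per_task(c: TaskCostInputs) -> float:
    """Expected USD cost of one task: token spend + infra + expected cost of errors."""
    if not 0.0 <= c.error_rate <= 1.0:
        raise ValueError("error_rate must be between 0 and 1")
    token_cost = (
        (c.input_tokens / 1000) * c.price_per_1k_input
        + (c.output_tokens / 1000) * c.price_per_1k_output
    )
    error_cost = c.error_rate * c.cost_per_error
    return token_cost + c.infra_cost + error_cost


# Made-up numbers for illustration only.
example = TaskCostInputs(
    input_tokens=2000, output_tokens=500,
    price_per_1k_input=0.003, price_per_1k_output=0.015,
    infra_cost=0.002, error_rate=0.05, cost_per_error=4.00,
)
print(f"${expected_cost_per_task(example):.4f} per task")
```

With these made-up inputs, tokens and infrastructure come to about $0.0155 per task, while the expected cost of errors adds $0.20, for a total of roughly $0.22. A model like this is only as good as its error-rate and cost-per-error estimates, which you would need to measure for your own workload.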

These six dimensions interact. A team strong on skills but weak on data will build elegant pipelines that produce wrong answers. A team strong on use cases but weak on platforms will identify perfect AI applications that can’t actually integrate with the systems they’re supposed to enhance.

The scorecard: scoring rubric and what each score means

For each dimension, score yourself 1 to 4. Be honest. The point of this exercise is to surface gaps, not to feel good.

| Dimension | 1 (Not ready) | 2 (Building) | 3 (Operational) | 4 (Strong) |
|---|---|---|---|---|
| Data | Data lives in 10+ systems, no canonical definitions, quality varies. | Warehouse exists, some curated marts, but agents still hit raw tables. | Curated semantic layer, governed access, agents query a clean surface. | Real-time, governed, with feature definitions and lineage. |
| Platforms | Core systems are heavily customized, APIs limited or undocumented. | Modern platforms in place, APIs exist, but identity model is fragmented. | Platforms expose AI-ready capabilities (Agentforce, Copilot tier, etc.) and they’re configured. | Platforms integrated through a unified identity and orchestration layer. |
| Skills | One ML engineer, no product or ops support, AI is a side project. | A small AI team, but data engineering and MLOps are bottlenecks. | Cross-functional pod (eng, product, ops, data) with senior leadership. | Multiple shipped AI products in production, dedicated platform team. |
| Use cases | “AI for everything,” no scoped initiatives, no owners. | A few pilots running, success metrics unclear. | 2-3 use cases with named owners, defined scope, and measurable goals. | Use case roadmap with prioritization framework, kill criteria, and quarterly reviews. |
| Governance | No AI-specific policies, security/legal involved late or not at all. | Draft policies exist, but no enforcement mechanism. | Access controls and audit logging operational, security/legal aligned. | Governance integrated into the platform; agents inherit user permissions automatically. |
| Economics | No unit cost model for AI workloads. | Some cost tracking, but per-feature ROI not measured. | Per-workload cost models, ROI tracked monthly. | Cost-per-task economics drive use case selection and model routing. |

Add the six scores. The total falls between 6 and 24, which maps to four readiness levels.

Reading your score: four readiness levels and what to do

Where you land determines what to focus on next. Most enterprise teams we work with score between 12 and 16 at the start of an engagement. That’s not a bad starting point. It’s a productive one.

6 to 11: Foundation. You’re not ready to ship AI in production, and that’s fine. The work is upstream. Focus on the lowest-scoring dimensions, almost always data and governance. Don’t start an AI roadmap until at least three of the six dimensions are scoring 2 or higher. Trying to ship AI from this score is how organizations lose six figures on pilots that quietly die.

12 to 17: Pilot-ready. You can run focused pilots that prove specific use cases. The trap at this level is mistaking pilot success for production readiness. Pilots succeed on conditions (curated data, controlled use, lots of human oversight) that don’t survive scaling. Use pilots to learn, not to commit.

18 to 21: Production-ready. You can ship AI in production for scoped use cases. Most teams at this level can run two to three AI systems in production simultaneously. Beyond that, the constraint becomes the platform team, not the technology.

22 to 24: Scale-ready. You’re operating AI as a capability, not a project. The work shifts from “can we ship it” to “how do we govern, optimize, and improve a portfolio of AI systems.” Most enterprises won’t be here in 2026. The few that are tend to have been investing in data infrastructure since 2019 or earlier.
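
The arithmetic behind the four levels is simple enough to sketch. The Python below is a minimal illustration, assuming you record scores in a dictionary keyed by hypothetical dimension names. It validates each 1-to-4 score, sums the six into a 6-to-24 total, maps that total onto the bands described above, and checks the Foundation-level rule that at least three dimensions should score 2 or higher before you start an AI roadmap.

```python
from typing import Dict, Tuple

# Hypothetical keys for the scorecard's six dimensions.
DIMENSIONS = ("data", "platforms", "skills", "use_cases", "governance", "economics")


def score_assessment(scores: Dict[str, int]) -> Tuple[int, str]:
    """Validate six 1-4 scores, sum them (6-24), and return (total, level)."""
    missing = [name for name in DIMENSIONS if name not in scores]
    if missing:
        raise ValueError(f"missing dimensions: {missing}")
    for name in DIMENSIONS:
        if scores[name] not in (1, 2, 3, 4):
            raise ValueError(f"{name} must be scored 1-4, got {scores[name]!r}")
    total = sum(scores[name] for name in DIMENSIONS)
    # Bands: 6-11 Foundation, 12-17 Pilot-ready, 18-21 Production-ready, 22-24 Scale-ready.
    if total <= 11:
        level = "Foundation"
    elif total <= 17:
        level = "Pilot-ready"
    elif total <= 21:
        level = "Production-ready"
    else:
        level = "Scale-ready"
    return total, level


def ready_for_roadmap(scores: Dict[str, int]) -> bool:
    """Foundation-level rule: at least three of the six dimensions score 2 or higher."""
    return sum(1 for name in DIMENSIONS if scores[name] >= 2) >= 3


example = {"data": 2, "platforms": 3, "skills": 2,
           "use_cases": 3, "governance": 2, "economics": 2}
print(score_assessment(example))   # (14, 'Pilot-ready')
print(ready_for_roadmap(example))  # True
```

Keeping the validation strict is deliberate: the rubric only works if every dimension gets a whole-number score, so a missing or out-of-range value raises an error instead of silently skewing the total.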

If you’re scoring lower than you expected, the right response is not “we need to hire a consultancy to fix this.” It’s “which one or two dimensions, if we improved them by one point, would unlock the most?” That’s almost always the most valuable single question this assessment surfaces.
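
One way to start on that question is sketched below in Python. It is a rough heuristic, not a method the scorecard prescribes: it assumes the lowest-scoring dimensions that can still gain a point are the best candidates (echoing the advice to focus on the lowest scores), and it computes how many points separate your total from the next level’s floor. The dimension keys and function names are hypothetical.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical keys for the scorecard's six dimensions.
DIMENSIONS = ("data", "platforms", "skills", "use_cases", "governance", "economics")
# Lowest total for each level above Foundation, from the scorecard's bands.
NEXT_LEVEL_FLOORS = ((12, "Pilot-ready"), (18, "Production-ready"), (22, "Scale-ready"))


def improvement_candidates(scores: Dict[str, int], count: int = 2) -> List[str]:
    """Return up to `count` of the lowest-scoring dimensions that can still gain a point."""
    improvable = [name for name in DIMENSIONS if scores[name] < 4]
    # sorted() is stable, so ties keep the scorecard's dimension order.
    return sorted(improvable, key=lambda name: scores[name])[:count]


def points_to_next_level(scores: Dict[str, int]) -> Optional[Tuple[int, str]]:
    """Return (points needed, next level), or None if already Scale-ready."""
    total = sum(scores[name] for name in DIMENSIONS)
    for floor, level in NEXT_LEVEL_FLOORS:
        if total < floor:
            return floor - total, level
    return None


example = {"data": 1, "platforms": 2, "skills": 2,
           "use_cases": 2, "governance": 1, "economics": 2}
print(improvement_candidates(example))  # ['data', 'governance']
print(points_to_next_level(example))    # (2, 'Pilot-ready')
```

Here, raising data and governance by one point each would move the total from 10 to 12, the floor of Pilot-ready. A real prioritization would also weigh which improvements your target use cases actually depend on, which this sketch doesn’t model.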

When to bring in outside help (and when not to)

Here’s our honest take, including on ourselves.

You probably don’t need outside help if your score is below 12. The work is foundational, and outside firms can’t accelerate it the way they can accelerate technical builds. Hire data engineers. Set up a small governance committee. Pick one platform to clean up. The companies that fix this themselves end up with better long-term capability than the ones that pay someone else to do it.

You might benefit from outside help in the 12-to-17 range if you’re trying to compress a 12-month learning curve into 6 months on specific skills like agentic AI architecture, where shipped experience matters and most teams are still figuring out what works. The right model isn’t a 50-person consulting team. It’s a small senior team that pairs with yours and leaves the playbooks behind. We’ve written more about why we built Cabin around this model and what a clean consultant exit timeline looks like, if you’re evaluating partners.

You should be careful about outside help if your score is 18 or higher. At that point, your team knows more about your specific stack than any consultant can in the time they’re with you. The right outside engagement at this level is targeted: a specific build, a specific platform integration, a specific capability gap. Not strategy. Not transformation. Not change management.

The pattern that works: keep the strategy in-house, bring outside help for execution on capabilities you don’t have yet, and require knowledge transfer as part of the engagement. Anything else creates the consultant dependency you were trying to avoid.

Frequently asked questions

How long does an AI readiness assessment take?

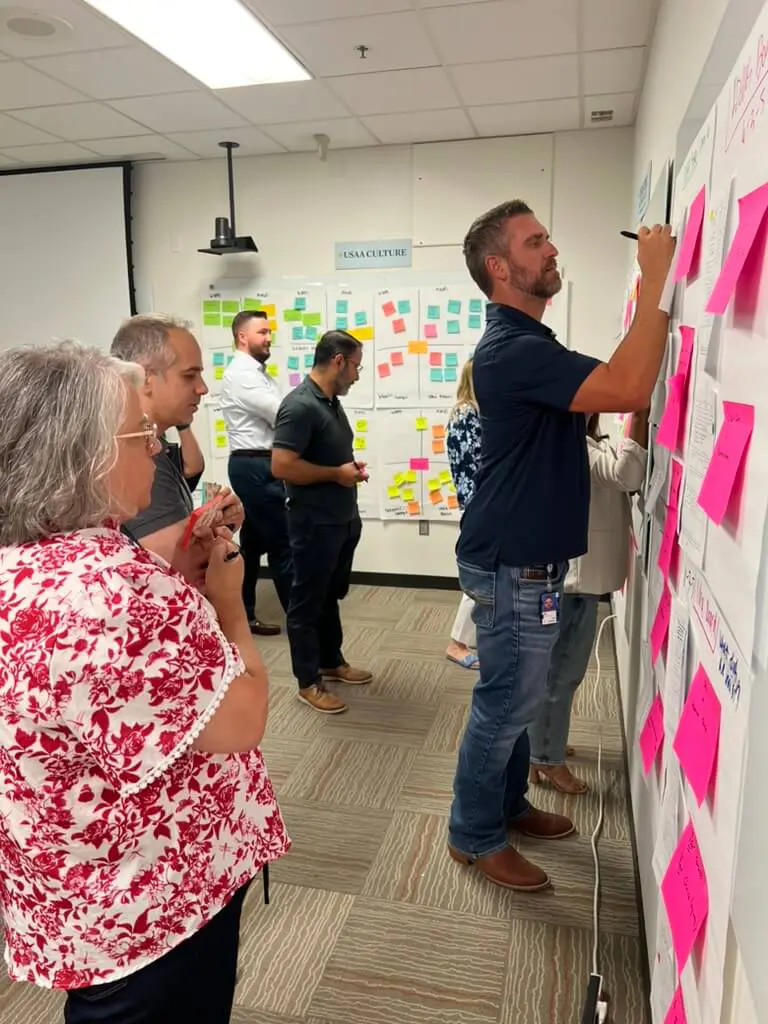

A focused, internal assessment takes about four hours of working time spread across two to three meetings: one to align on the scoring rubric, one to score the dimensions with the right people in the room, and one to translate the scores into next steps. Bigger consultancies stretch this into 6-8 weeks because that’s the engagement model their pricing requires.

Who should be in the room for a readiness assessment?

Five to seven people: a data leader, an engineering leader, a product leader, an ops or business leader who owns a target use case, someone from security or legal, and an executive sponsor. Smaller groups miss dimensions. Larger groups stop being honest.

What’s the difference between AI readiness and AI maturity?

Readiness is about whether you’re prepared to ship a specific class of AI systems today. Maturity is about how operationalized your AI capability is over time. Readiness is the input. Maturity is the output. A team can be ready and not yet mature. The reverse is rare.

Should we score ourselves or hire someone to score us?

Score yourselves. Outside scoring is mostly theater because the people who actually know your systems are the ones inside them. The value of an outside perspective is in interpretation: “given your scores, what are other teams in your situation doing that’s working?” Not in the scoring itself.

If you score the six dimensions honestly and act on the lowest two, you’ll be in a different place in 90 days than you are today. That’s the whole point of doing this exercise. Not to produce a report. To produce a decision.

If you want a second set of eyes after you’ve scored yourselves, we’re happy to walk through it with you. The conversation we have is short, direct, and free, and you’ll leave knowing whether outside help would actually help.