AI Transition: Why Most Organizations Get It Wrong

Last updated: March 2026

Most AI transitions don’t fail at the model layer. They fail the first time the consultants leave and the team realizes they can’t touch what was built without breaking something.

That’s not a technology problem. It’s a capability problem. And it’s the one thing nobody selling AI transition work wants to put in their pitch deck.

Gartner data shows only 48% of AI projects ever make it into production. More telling: BCG found that just 26% of companies have built the internal capabilities to move beyond proof-of-concept and generate real value at scale. The other 74% got something built. They just can’t own it.

Enterprise leaders are under real pressure right now. Boards want AI. Roadmaps have shifted. Budgets have been reallocated. But across the 40+ enterprise products our partners have shipped, the pattern is consistent: organizations race to implement AI without building the organizational muscle to own what gets built. The model ships. The workflow goes live. The consultants exit. Six months later, the system is sitting exactly where they left it, because nobody inside knows how to take it further.

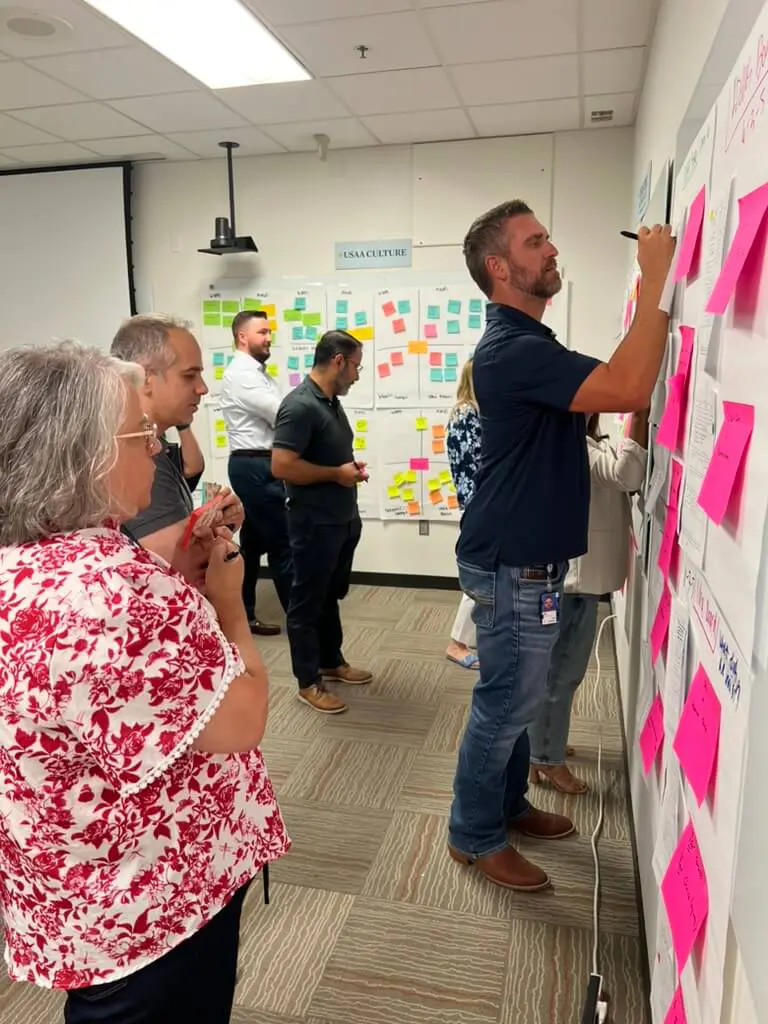

We’re seeing this from the inside right now. Three weeks into a current financial services engagement, the client’s engineers are writing prompts alongside ours. Claude Code is doing work that would have gone to a design tool six months ago. The build is faster because the team is in it, not watching it.

This article breaks down what an AI transition actually requires, where it stalls, and what it looks like when it’s done right.

What Is an AI Transition, Really?

An AI transition is the process by which an organization restructures its products, workflows, and internal capabilities to make artificial intelligence foundational, not a feature added on top. It differs from AI implementation in that success is measured by what the organization can do independently after the engagement ends, not whether the system went live on schedule.

That distinction matters more than most organizations realize at the start. Vendors and consultancies tend to frame an AI transition as a delivery problem: scope it, sprint on it, ship it. But the organizations that come out ahead treat it as a capability-building problem with a technology component. The technology is the mechanism. The capability is the outcome.

In regulated industries, this is especially true. In financial services, existing model risk frameworks from banking regulators already require documentation, validation, and governance for AI systems, including explainability and audit trails on AI-driven decisions. In healthcare, new FDA and HHS guidance for AI-enabled products requires that organizations be able to document how their systems behave and monitor them over time. You’re not just building something. You’re building something a compliance team can audit, engineers can maintain, and a product team can take further without re-engaging an outside firm every time requirements change.

AI Implementation vs. AI Transformation: What’s the Difference?

The terms get used interchangeably. They’re not the same thing, and the distinction is what separates organizations that own their AI capability from those that rent it indefinitely.

Implementation is about the deliverable. Transformation is about what the organization can do after the deliverable ships.

| Dimension | AI Implementation | AI Transformation |
| --- | --- | --- |
| Primary deliverable | A working system or feature | Internal capability to build and extend systems |
| Measure of success | Go-live | Team autonomy by quarter end |
| Knowledge ownership | Stays with the vendor | Transferred to internal team |
| What gets left behind | A product | A playbook, a component library, and a trained team |
| Consultant role | Builders | Builders and teachers |
| Risk if consultants exit | High: team can’t take it further | Low: team runs the playbook |
| Time horizon | Sprint to launch | Launch plus capability ramp |
| Common failure mode | Works at handoff, stalls at month three | Rarely fails because the team owns it |

Most engagements sold as “AI transition work” are implementation with capability-transfer language in the proposal. The tell: ask what your team will be able to do independently at month three. If the answer is vague, you’re buying implementation, and you’ll be paying for the next round before the year is out.

The Three Phases Where AI Transitions Actually Stall

This is the failure pattern as it actually plays out. It’s not one thing; it’s a sequence. Most organizations hit at least one of these phases. Some hit all three.

Phase 1: The model is built, but the team can’t take it further

An AI system goes live. A document processing workflow, an intelligent triage tool, an agentic routing system for customer service. It works. For a while.

Then a new requirement comes in. Or the model provider pushes an update that changes behavior. Or the compliance team wants to audit the decision logic. Suddenly there’s nobody internal who can answer the questions, let alone make the changes.

Major LLM providers ship new models and retire old endpoints frequently enough that serious platforms now treat model updates as an ongoing integration risk. Integration vendors describe maintaining dedicated compatibility layers and updating connectors essentially at launch for each new major model. That’s the invisible work internal teams consistently underestimate when they take on a system built by someone else.
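The compatibility-layer idea is easier to own when it is concrete. Below is a minimal Python sketch, assuming every LLM call in the product goes through one internal gateway pinned to a specific model version, and that a new version is promoted only after it passes a suite of “golden” test prompts the internal team maintains. The model names, the `ModelGateway` and `GoldenCase` classes, and the lambda stand-ins for provider calls are all hypothetical; a real gateway would wrap the provider’s own SDK.

```python
from dataclasses import dataclass, field
from typing import Callable

# A "model" is any callable that maps a prompt to text. In production this
# would wrap a provider SDK call; here it is a hypothetical stand-in.
ModelFn = Callable[[str], str]


@dataclass
class GoldenCase:
    prompt: str
    check: Callable[[str], bool]  # True if the model's output is acceptable


@dataclass
class ModelGateway:
    """Single internal entry point for LLM calls, pinned to one model version."""
    registry: dict[str, ModelFn]
    pinned: str
    history: list[str] = field(default_factory=list)

    def complete(self, prompt: str) -> str:
        # Product code never names a model version directly; it calls the gateway.
        return self.registry[self.pinned](prompt)

    def try_promote(self, candidate: str, suite: list[GoldenCase]) -> list[str]:
        """Run the golden suite on a candidate version; switch only if every case passes.

        Returns the prompts that failed. An empty list means the candidate is now pinned.
        """
        model = self.registry[candidate]
        failures = [c.prompt for c in suite if not c.check(model(c.prompt))]
        if not failures:
            self.history.append(self.pinned)  # keep a rollback trail
            self.pinned = candidate
        return failures


# Usage: a hypothetical new version changes its output format.
registry: dict[str, ModelFn] = {
    "model-v1": lambda p: "APPROVE" if "low risk" in p else "REVIEW",
    "model-v2": lambda p: "approve",  # different casing, ignores risk
}
suite = [
    GoldenCase("Classify: low risk claim", lambda out: out == "APPROVE"),
    GoldenCase("Classify: unusual claim", lambda out: out == "REVIEW"),
]
gateway = ModelGateway(registry=registry, pinned="model-v1")
failed = gateway.try_promote("model-v2", suite)
print(failed)          # both golden cases fail
print(gateway.pinned)  # still model-v1
```

The design choice worth copying is that the golden suite lives with the internal team: when a provider ships a new version, the people who own the suite are the ones deciding whether behavior changed.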

The underlying issue isn’t technical. The build happened to the team, not with them. The consultancy shipped something technically sound and structurally opaque. Internal engineers weren’t in the design reviews. The product team doesn’t understand how the prompt architecture works. The system is a black box the org depends on and can’t safely touch.

Documentation doesn’t fix this. It covers what was built, rarely why. The only thing that transfers judgment is working alongside the people building, not receiving a handoff from them at the end.

Phase 2: The workflow is designed, but adoption never lands

This one is more common in enterprise AI rollouts than anyone wants to admit. An intelligent workflow gets built, something that should genuinely accelerate work for the people using it. Adoption is fine, at best. Partial. Inconsistent.

The UX wasn’t designed for how AI actually behaves. AI-powered workflows require a different interaction model than traditional software. Users need to understand what the system is confident about, where it needs human judgment, and what happens when they override it. When that context is missing, users default to their old workflow. The AI sits there looking impressive in the demo and unused in production.

This is why UX for AI is a separate discipline, not a skin on top of standard product design. The interface has to make the AI’s reasoning legible, or adoption stalls regardless of how good the underlying model is.
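One way to make that reasoning legible is to decide, per suggestion, how much the interface should lean on the AI, and to track how often people override it. A minimal Python sketch, assuming the system produces a confidence score between 0 and 1 plus a short rationale for each suggestion. The `Route` modes, the 0.9 and 0.6 thresholds, and the override-rate metric are illustrative choices rather than a standard; in practice the thresholds would be tuned against real outcomes.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO = "auto"          # suggestion shown as ready to accept
    REVIEW = "review"      # suggestion shown, a human confirms or edits
    HUMAN_ONLY = "human"   # no pre-filled answer; a person decides


@dataclass
class Suggestion:
    label: str
    confidence: float  # 0.0 to 1.0 (assumes the system exposes a usable score)
    rationale: str     # one-line explanation surfaced next to the suggestion


def route(s: Suggestion, auto_at: float = 0.9, review_at: float = 0.6) -> Route:
    """Map confidence to an interaction mode. Thresholds are hypothetical defaults."""
    if not 0.0 <= s.confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if s.confidence >= auto_at:
        return Route.AUTO
    if s.confidence >= review_at:
        return Route.REVIEW
    return Route.HUMAN_ONLY


@dataclass
class Decision:
    suggestion: Suggestion
    user_choice: str

    @property
    def overridden(self) -> bool:
        return self.user_choice != self.suggestion.label


def override_rate(decisions: list[Decision]) -> float:
    """Share of decisions where the user rejected the AI's suggestion."""
    if not decisions:
        return 0.0
    return sum(d.overridden for d in decisions) / len(decisions)


# Usage
s = Suggestion("REVIEW", 0.72, "Amount is 3x this customer's usual claim")
print(route(s))  # Route.REVIEW
print(override_rate([Decision(s, "REVIEW"), Decision(s, "APPROVE")]))  # 0.5
```

A rising override rate on suggestions the interface presents as ready to accept is an early sign that users don’t trust the output, which is exactly where adoption starts to stall.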

Phase 3: The consultants leave and the tribal knowledge goes with them

This is the most painful phase because it usually doesn’t surface until months after the engagement ends. The team that built the system knew why certain architectural decisions were made, which edge cases to watch for, how the guardrails were calibrated. That team is gone now. The documentation covers what was built. It rarely explains why.

What’s left is a capable system and an internal team that’s hesitant to touch it. Not because they aren’t capable, but because they don’t have the context to make confident decisions. In financial services and healthcare, that hesitancy is entirely rational. One wrong change to an LLM integration in a healthcare workflow isn’t a bug to patch. It’s a potential compliance event.

A 2025 MIT report found that 95% of enterprise generative AI implementations were falling short, and attributed the root cause not to model quality but to the “learning gap” for both tools and organizations, particularly flawed enterprise integration and misaligned resource allocation. BCG’s 2024 research is even more direct: it recommends that roughly two-thirds of effort in AI transitions go toward people-related capabilities rather than technology, arguing that’s the real bottleneck.

The organizations that avoid this phase treat knowledge transfer as a first-order deliverable, not a wrap-up session on the last day of the engagement.

What Organizational Readiness for AI Actually Looks Like

Before committing budget and timeline to an AI transition, it’s worth being honest about where the organization actually is. Not where leadership wants it to be.

These are the indicators worth pressure-testing before scoping an engagement:

Technical readiness

- [ ] Internal engineers can explain how your current systems handle data at the API layer

- [ ] At least one engineer has worked with LLM APIs in a production context

- [ ] Data infrastructure can support model inputs without a major pre-project overhaul

- [ ] Your team has a working understanding of compliance obligations around AI outputs

Organizational readiness

- [ ] There’s a named internal owner for the AI system, not just a project sponsor

- [ ] That owner has the authority to make product decisions without a six-week approval cycle

- [ ] Engineers have capacity to pair during the build, not just receive a handoff

- [ ] Leadership has aligned on what “done” looks like before the engagement starts

Culture readiness

- [ ] Teams are willing to change workflows, not just add a new tool on top of existing ones

- [ ] There’s tolerance for iteration — the first version won’t be the final version

- [ ] Failure is treated as data, not a reason to kill the initiative

Checking fewer than half of these boxes means the transition will be harder than the technology makes it look. That’s not a reason to wait. It’s a reason to build the readiness work into the engagement scope rather than assuming it’ll sort itself out.
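If it helps to run the checklist as a quick self-assessment, the sketch below scores it in Python. It assumes every item carries equal weight and uses short paraphrased labels; only the “fewer than half” threshold comes from the text, and the function and field names are hypothetical.

```python
# Checklist labels paraphrase the readiness list (hypothetical short forms).
READINESS_CHECKLIST: dict[str, list[str]] = {
    "technical": [
        "Engineers can explain data handling at the API layer",
        "An engineer has used LLM APIs in production",
        "Data infrastructure supports model inputs without a major overhaul",
        "Team understands compliance obligations around AI outputs",
    ],
    "organizational": [
        "Named internal owner for the AI system",
        "Owner can decide without a six-week approval cycle",
        "Engineers have capacity to pair during the build",
        "Leadership has aligned on what done looks like",
    ],
    "culture": [
        "Teams will change workflows, not just add a tool",
        "Tolerance for iteration",
        "Failure is treated as data",
    ],
}


def readiness_report(checked: set[str]) -> dict:
    """Score a readiness self-assessment, weighting every item equally (an assumption)."""
    all_items = {item for items in READINESS_CHECKLIST.values() for item in items}
    unknown = checked - all_items
    if unknown:
        raise ValueError(f"unknown checklist items: {sorted(unknown)}")
    gaps = {
        category: [item for item in items if item not in checked]
        for category, items in READINESS_CHECKLIST.items()
    }
    score = len(checked) / len(all_items)
    return {
        "checked": len(checked),
        "total": len(all_items),
        "score": round(score, 2),
        # Rule of thumb from the text: fewer than half checked means readiness
        # work belongs inside the engagement scope.
        "build_readiness_into_scope": score < 0.5,
        "gaps": gaps,
    }


# Usage: a team with two technical boxes and a named owner checked.
checked = set(READINESS_CHECKLIST["technical"][:2]) | {"Named internal owner for the AI system"}
report = readiness_report(checked)
print(report["checked"], report["total"], report["score"])  # 3 11 0.27
print(report["build_readiness_into_scope"])                 # True
```

The `gaps` field is the useful part: it turns the score into a list of specific readiness items to scope into the engagement.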

For more on building the team capability that makes transitions hold, see how we approach team capability building that actually carries forward and our framework for consultant exit strategy and capability transfer timelines.

What Capability Transfer Looks Like in Practice

Abstract capability transfer sounds good in every proposal. Here’s what it looks like when it’s actually happening.

Week one, the client’s engineers are in the design reviews. Not observing — asking questions, pushing back on architectural decisions, understanding the tradeoffs being made. By week three, they know why the orchestration layer is structured the way it is. By week six, they’re writing prompts alongside the build team, not waiting for them to be handed over.

The artifacts matter, but not in the way most firms think about them. Not documentation for its own sake. Artifacts that encode judgment: a prompting playbook that explains not just what prompts are used but why they’re structured that way; a component library annotated well enough that a new engineer can take it further six months from now without tribal knowledge; an adoption dashboard that makes it visible when the system is behaving as expected and when it needs attention.
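A prompting playbook entry can be as simple as a versioned record that keeps each prompt next to the reasoning behind it. A minimal Python sketch, assuming prompts are stored as `string.Template` strings; the `PlaybookEntry` class, its fields, and the claims-triage example are hypothetical and only illustrate the shape.

```python
from dataclasses import dataclass
from string import Template


@dataclass(frozen=True)
class PlaybookEntry:
    """A prompt plus the judgment behind it, versioned like code."""
    name: str
    version: str
    template: str   # $placeholders, filled via string.Template
    rationale: str  # why the prompt is structured this way
    owner: str      # internal person or team accountable for changes

    def render(self, **values: str) -> str:
        # substitute() raises KeyError for a missing placeholder,
        # so a half-filled prompt never reaches the model.
        return Template(self.template).substitute(**values)


# Hypothetical example entry
triage = PlaybookEntry(
    name="claims-triage",
    version="2.1",
    template=(
        "Classify the claim below as APPROVE or REVIEW, "
        "then give one sentence of reasoning.\n"
        "Claim: $claim_text"
    ),
    rationale=(
        "Two fixed labels keep downstream parsing simple; "
        "the one-sentence reasoning is stored for audit."
    ),
    owner="claims-platform-team",
)

print(triage.render(claim_text="Water damage, single room."))
```

Keeping `rationale` and `owner` in the same record as the template is the point: when someone changes the prompt six months later, the reason it looked that way travels with it.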

By month three, the client’s team is running sprint reviews, making architectural calls, and escalating only when something genuinely novel comes up. That’s the target state. Not dependency. Autonomy.

In financial services, where the majority of Cabin’s work happens, this looks like: a compliance team that can audit the AI logic without needing the original architects in the room. An engineering team that can update the model integration when the provider pushes a new version. A product team that can extend the intelligent workflow to a new business line without re-scoping the engagement.

The organizations that get here share one thing: they insisted on pairing from day one. Not a kickoff session, not a handoff meeting. Their engineers in every design review, every architectural decision, from week one.

For more on how this works structurally, see how we address the structural traps that create consultant dependency and what human-centered AI consulting looks like when the goal is systems people actually use.

How to Know When Your AI Transition Is Done

Not at go-live. Go-live is a milestone, not a finish line.

A transition is done when your team can do three things without outside help: operate the system day-to-day, take it further when requirements change, and explain it to a compliance auditor. Go-live gets you the first one. The second and third take longer, but they’re what determine whether the transition actually held.

The concrete signal: the client’s team is making architectural decisions they would have escalated three months ago. Not because the decisions got easier — because the team got more capable.

The external signal is simpler. When a new business requirement comes in, the first conversation doesn’t start with “can we get the consultants back for this?” It starts with “here’s how we’d approach it.” That’s the moment.

The data backs up how rare that moment is. Gartner reports only 48% of AI projects make it to production, with organizational capability gaps, integration issues, and data problems cited more often than model performance as the reasons for failure. AvePoint’s 2025 enterprise survey found that AI deployment delays average close to a year for three-quarters of organizations, and that most continue to rely on external support well past launch because internal capabilities weren’t built in parallel.

BCG puts it plainly: only about a quarter of companies have developed the internal capabilities to scale AI independently. The other three-quarters built something. They just don’t own it yet.

The answer isn’t to lower ambition. It’s to build differently, treating capability transfer as the primary deliverable rather than the final slide in the deck.

If you’re evaluating where your organization sits in this process, our digital transformation consulting page and consultant exit strategy framework are good starting points.

Frequently Asked Questions

What is the difference between an AI transition and AI implementation?

AI implementation refers to building and deploying a specific AI system or feature. The deliverable is a working product. An AI transition is broader: it’s the process of building the internal organizational capability to own, operate, and take AI systems further independently. Implementation is a component of a transition, but a transition isn’t complete until the team can operate without the original build team.

How long does an AI transition typically take?

A realistic structure: working prototype in the first few weeks, initial capability transfer by month two, team autonomy by months three to four. Full independence — where the internal team is confidently making architectural decisions without escalation — typically takes one to two quarters, depending on technical depth and how aggressively capability transfer is built into the engagement from the start.

What’s the biggest reason AI transitions fail?

Organizational capability gaps, not model quality. MIT’s 2025 research found 95% of enterprise generative AI implementations were falling short primarily because of integration and learning gaps, not algorithms. BCG’s 2024 data reinforces this: firms that succeed put roughly two-thirds of their effort into people-related capabilities, not technology. The most common specific failure mode is treating capability transfer as a handoff at the end rather than a parallel track throughout.

The organizations getting AI right aren’t the ones with the biggest budgets or the most sophisticated models. They’re the ones that insisted on owning what they built, and found partners willing to teach while they shipped.

If you’re mid-transition and hitting the stall points above, or evaluating how to structure an engagement that leaves your team stronger at the end of it, we’re worth talking to. The first working prototype ships in weeks. Your team reaches autonomy by quarter end. The playbook stays when we go.

About the Author

Cabin is an AI transformation consultancy that architects AI-native products, implements intelligent systems, and builds client team capability while doing it. Founded by the core team behind Skookum, which became Method under GlobalLogic and rolled up to Hitachi, Cabin’s partners have shipped 40+ enterprise products together over nearly 20 years, for clients including FICO, American Airlines, First Horizon, Mastercard, Trane Technologies, and SageSure.

Design system implementation is where Cabin operates every day, not as an advisor watching from the sidelines, but as the senior designers, engineers, and strategists doing the work. The team has built and rescued design systems across financial services, healthcare, and insurance — embedding with client teams, not above them, so the capability stays when the engagement ends.

Everything Cabin publishes on design systems, DesignOps, and team enablement comes from work currently in progress, not from research reports or conference decks. When we write about why design systems fail, it’s because we’ve inherited the aftermath. When we write about governance that works at scale, it’s because we’ve built the playbooks.
