Enterprise AI Capability Building: Why It’s the Real Outcome of an AI Engagement

Last updated: May 2026

Most enterprise AI engagements end with a working system and a team that can’t extend it. The vendor leaves. The Slack channel goes quiet. Six months later, when the model needs retraining or the use case needs to evolve, someone in the organization has to decide whether to call the vendor back or start over. Both options cost money. One of them costs trust.

Enterprise AI capability building is the alternative: a deliberate engagement model whose goal isn’t a deployed system but an organization that can deploy, govern, and extend AI systems without the consultancy. The deployed system is the byproduct. Capability is the outcome.

That distinction sounds semantic. It isn’t. It changes which people are in the room, how the work is sequenced, what artifacts get produced, and how success is measured. This piece covers what enterprise AI capability building actually means in practice, why most consulting engagements don’t produce it, and what to look for if you want capability instead of just delivery.

What enterprise AI capability building actually means

Enterprise AI capability building is the process of developing the people, processes, and architectural knowledge inside your organization that are required to deploy, operate, govern, and extend AI systems without ongoing vendor dependency. It’s not training. It’s not change management. It’s the deliberate transfer of practitioner-level skill while production work is happening.

The shorthand most consultancies use is “enablement,” which usually means a few workshops, some documentation, and a handoff meeting at the end of the project. That’s not capability building. That’s a deliverable wrapped in training language.

This distinction matters more now than it did during the cloud or mobile transitions because AI systems decay. A web app shipped in 2018 can still run largely unchanged in 2026. A model deployed in 2024 has likely been retrained, had its prompts rewritten several times, and gone through at least one new safety review. Without internal capability, each of those events becomes a vendor dependency or a stalled use case. Capability building is the part of an enterprise AI strategy that determines whether your team can keep the system alive after the vendor leaves.

Why most AI engagements skip capability building

The honest answer is that capability building is harder to sell, harder to deliver, and harder to bill against than pure delivery work. Three structural incentives push consultancies toward delivery-only engagements.

The first is staffing economics. Building capability requires senior practitioners pairing directly with client engineers. That keeps expensive talent on the engagement longer than a delivery model needs. Most large consultancies pyramid their staffing: a senior partner sells the work, a few mid-level managers run it, and a fleet of junior consultants do the implementation. Junior consultants can’t transfer capability they don’t have.

The second is the comfort of the deliverable. A working AI system is a thing that can be demoed, signed off on, and invoiced against. Capability is harder to demo. It looks like the absence of vendor dependency, which is the opposite of what most billing models reward. Consultancies that profit from change orders and extension contracts have an active disincentive to leave teams capable.

The third is that clients don’t always ask for it. Procurement evaluates proposals on price, timeline, and deliverable specifications. “How will my team be different at the end?” is rarely a scoring criterion, even though it’s the question that determines whether the engagement was worth the spend.

Cabin’s stance on this is direct: an AI engagement that produces a working system but leaves your team unable to extend it has failed, even if the deliverable shipped on time. The model is the easy part. The team that can run the model in production for the next three years is the actual asset. That’s especially true in regulated industries, where the moment a use case meets a model risk review or an unexpected production output is also the moment a vendor-dependent team discovers it doesn’t have the answers a regulator expects.

The four capabilities your team needs to own

Most enablement programs treat AI capability as a single skill: “AI literacy” or “prompt engineering.” It isn’t. Production AI systems require four distinct capabilities, and a real capability-building engagement maps to all four. Skip any one and the team has gaps that surface the first time something breaks.

Here’s the breakdown, with what your team should be able to do at handoff:

| Capability | What your team owns | How to test it |
|---|---|---|
| Architecture | Decisions about model selection, retrieval design, agent orchestration, and integration patterns. The system’s structural logic. | Can your engineers explain why the system uses model X over Y, and what they’d change if requirements shifted? |
| Evaluation | The test suite that checks whether the system is working: ground truth datasets, regression tests, drift monitoring, human review loops. | Can your team run an eval against a new model version without help? Can they explain what each metric measures and why? |
| Operations | Runbooks for incidents, model updates, prompt changes, and rollback. The boring work that keeps systems alive. | When the model produces an unexpected output in production, does your team know who responds, in what order, with what authority? |
| Governance | Decision rights, audit trails, risk reviews, and compliance posture. Especially load-bearing in financial services and healthcare. | Can your team answer the regulator’s questions about how the system makes decisions, who approves changes, and what happens when something goes wrong? |

(Caveat: this table is a starting frame, not a maturity model. The boundaries between these capabilities blur in real engagements, especially Operations and Governance in regulated industries. Use it to find your gaps, not to grade them.)

Architecture is the one most engagements try to teach. The other three are usually skipped, deferred, or replaced with documentation that nobody reads.

The evaluation gap is the most expensive. Without an evaluation suite your team owns and runs, you can’t safely upgrade a model, swap a vendor, or add a use case. Every change becomes a wait-for-the-vendor moment. We’ve seen organizations stuck on outdated models for 18+ months because the original vendor wrote a custom eval that nobody else can interpret.
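To make “an evaluation suite your team owns and runs” concrete, here is a minimal sketch of a regression gate for a model upgrade. It assumes a small labeled ground-truth dataset and treats each model version as a plain function from prompt to answer. The names `EvalCase`, `run_eval`, and `upgrade_allowed`, the exact-match metric, and the `max_regression` tolerance are all hypothetical illustration choices; real suites usually combine task-specific metrics, drift checks, and human review.

```python
from dataclasses import dataclass
from typing import Callable, Sequence

# Hypothetical type: a model version is any function from prompt to answer.
Model = Callable[[str], str]


@dataclass(frozen=True)
class EvalCase:
    """One ground-truth example: an input and the answer we expect."""
    prompt: str
    expected: str


def run_eval(model: Model, cases: Sequence[EvalCase]) -> float:
    """Return the fraction of cases where the model's answer matches the expected one.

    Exact match after trimming whitespace and lowercasing is a deliberately
    simple, assumed metric; swap in whatever your use case actually needs.
    """
    if not cases:
        raise ValueError("eval set is empty")
    hits = sum(
        model(case.prompt).strip().lower() == case.expected.strip().lower()
        for case in cases
    )
    return hits / len(cases)


def upgrade_allowed(
    current: Model,
    candidate: Model,
    cases: Sequence[EvalCase],
    max_regression: float = 0.0,
) -> bool:
    """Gate a model swap: the candidate may not score below the current model
    by more than `max_regression` (an assumed, team-chosen tolerance)."""
    return run_eval(candidate, cases) >= run_eval(current, cases) - max_regression


if __name__ == "__main__":
    cases = [EvalCase("2+2?", "4"), EvalCase("Capital of France?", "Paris")]
    answers = {"2+2?": "4", "Capital of France?": "paris"}

    def current_model(prompt: str) -> str:
        return answers[prompt]  # stand-in for the deployed model

    def candidate_model(prompt: str) -> str:
        return "4"  # stand-in for a new model version that regresses

    print(run_eval(current_model, cases))    # 1.0
    print(run_eval(candidate_model, cases))  # 0.5
    print(upgrade_allowed(current_model, candidate_model, cases))  # False
```

The point is less the code than the ownership: if your team wrote the cases and understands the metric, swapping a model becomes a decision you can make on your own evidence rather than a wait-for-the-vendor moment.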

The governance gap is the most dangerous. In regulated industries, “the vendor said it was fine” is not a defensible audit answer. Your team needs to be able to explain decisions the system made, on its own, to a regulator or an internal risk committee. That capability has to be built deliberately. It does not transfer through documentation alone, though a real knowledge transfer checklist does name the artifacts, decisions, and operational rituals that have to move from vendor to team.

Naming the four capabilities is the easy part. The harder question is how team capability building actually gets structured into the work, week over week, so the team is operating the system by the time the vendor leaves.

How capability transfer gets structured into the work

Capability transfer that actually sticks is built into the engagement structure from week one, not bolted on at the end. Here’s the framework Cabin uses, called the Architect-Pair-Own (APO) model. It’s the structural answer to “how does capability move from us to you?”

The APO model has three overlapping phases that run across a typical 12-week engagement. Each phase has a different ratio of vendor-to-client involvement, and a specific artifact produced at the end.

Phase 1: Architect (weeks 1-4), vendor-led, client-shadowing

Cabin engineers and architects make the foundational decisions: model selection, retrieval architecture, evaluation strategy, integration approach. Client engineers shadow every decision review, with all reasoning documented in architecture decision records (ADRs). Outcome: working prototype plus an ADR repository the client owns.

Phase 2: Pair (weeks 5-8), co-led, equal authority

Cabin engineers and client engineers co-author every change to the system. Pull requests require both a Cabin reviewer and a client reviewer. Client engineers run their first independent eval cycle with Cabin observing. Outcome: production-ready system plus an evaluation suite the client can operate.
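The Phase 2 review rule (every pull request needs both a vendor reviewer and a client reviewer) can be expressed as a simple merge check. This is a minimal sketch, assuming each approval records the reviewer’s organization; `Approval`, `can_merge`, and the `"vendor"`/`"client"` labels are hypothetical. In practice a team would more likely enforce this through its code host’s branch protection or code-owner settings than through custom code.

```python
from dataclasses import dataclass
from typing import Iterable

# Hypothetical organization labels for reviewers.
VENDOR = "vendor"
CLIENT = "client"


@dataclass(frozen=True)
class Approval:
    reviewer: str
    org: str  # VENDOR or CLIENT


def can_merge(author: str, approvals: Iterable[Approval]) -> bool:
    """Phase 2 rule: a change needs at least one vendor approval and at least
    one client approval. The author's own approval is ignored, so nobody can
    satisfy their own organization's half of the requirement."""
    orgs = {a.org for a in approvals if a.reviewer != author}
    return {VENDOR, CLIENT} <= orgs


if __name__ == "__main__":
    approvals = [Approval("dana", CLIENT), Approval("lee", VENDOR)]
    print(can_merge("sam", approvals))   # True
    print(can_merge("dana", approvals))  # False: dana's own approval doesn't count
```

Making both signatures mandatory is what keeps Phase 2 from quietly reverting to vendor-led delivery: client engineers can’t be bypassed, and they see every change.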

Phase 3: Own (weeks 9-12), client-led, vendor on standby

Client engineers drive all changes; Cabin reviews and advises but does not implement. The client team handles its first incident response on its own. Governance runbooks are tested against real scenarios with the client’s risk team. Outcome: client team running the system in production without daily Cabin involvement.

The reason this works where workshops and end-of-project training don’t: the capability builds through reps on real production work, not through abstract exercises. By the time week 12 hits, the client team has already done the work three times in three different contexts. The handoff isn’t a moment; it’s the natural endpoint of how the engagement was run.

We don’t claim this is the only model that works. We claim that any model without this progression (client engineers shadow the vendor, then pair with it, then own the work while the vendor stands by) produces a delivery, not a capability.

Phase 3 is also where most engagements get tested in ways the brief didn’t anticipate. The system behaves correctly in 95% of cases and strangely in the other 5%. A regulator asks a question nobody scoped the answer for. An edge case surfaces that requires changing a prompt, a retrieval rule, and a governance approval at the same time. The point of Phase 3 is for the client team to handle these moments while the vendor is still in the room (advising, not implementing) so that nothing about the next moment requires a phone call back.

What to ask vendors before you sign

If you’re evaluating an AI consulting engagement and capability transfer matters to you, six questions tell you almost everything you need to know about whether the vendor can actually deliver it. Most pitch decks won’t answer them. The answers you want are specific.

- Who specifically will pair with my engineers, and how senior are they? If the answer is junior consultants or “a flexible team,” capability transfer will not happen. Senior practitioners must do the pairing.

- What artifacts will my team own at handoff? A real answer names them: ADRs, evaluation suite, runbooks, prompt libraries, governance documentation. A vague answer (“full documentation”) means there isn’t a plan.

- Will my team run an evaluation cycle independently before you leave? If no, the eval gap will surface six months in. If yes, ask how they’ll know your team can do it.

- How will we handle the first production incident? If the vendor’s plan is to lead the response, your team won’t learn it. If the plan is to coach your team through it while it happens, you’ll have an on-call team that’s done it once.

- Who will own model updates after you leave? “We can come back” is a dependency answer. “Your team will own the eval suite and the update playbook” is a capability answer.

- What does the engagement look like if my team gets capable faster than expected? A vendor with the right incentives will be willing to scope down. A vendor with the wrong incentives will resist.

The answers don’t have to be perfect. They have to be specific. A consultancy that has actually done capability transfer can answer all six in concrete terms. A consultancy that hasn’t will hedge. The vendor’s consultant exit strategy (when it starts, what it transfers, and how it ends) should already be on the table by question two.

Frequently asked questions

What’s the difference between AI enablement and AI capability building?

AI enablement usually means training, workshops, and documentation. AI capability building means your team can deploy, operate, govern, and extend production AI systems without the vendor. Enablement teaches concepts. Capability building transfers practitioner-level skill through paired production work.

How long does enterprise AI capability building take?

For a single use case, plan on 10-12 weeks of paired engagement to transfer meaningful capability. Capability across an enterprise AI portfolio (multiple use cases, multiple teams) is a multi-quarter program. Anyone promising “AI capability in 30 days” is selling something else, probably a workshop series.

Can capability building happen alongside production AI delivery?

Yes, and that’s the only way it works.

Capability building as a separate phase after delivery rarely sticks because the engineers who would benefit are usually moved to other projects by the time it starts. Documentation gets written, training sessions get scheduled, and the people who needed the capability are already gone. The capability has to transfer during the build, with the same engineers who will own the system afterward. That’s what makes the pairing model load-bearing: it isn’t a parallel workstream; it’s how the build happens. Senior practitioners write code and review pull requests alongside client engineers, and the capability transfer is the byproduct of that shared work.

What does enterprise AI capability building cost?

A capability-building engagement typically costs 15-25% more than a delivery-only engagement of the same scope, because senior practitioners spend more time pairing and less time implementing on their own. The premium is recovered the first time you’d otherwise have called the vendor back and didn’t need to. For most enterprises with 3+ AI use cases, the break-even arrives inside 12 months.

Do small teams need capability building?

More than enterprises do. A 200-person organization can absorb some vendor dependency. A 30-person product team can’t afford to wait for a vendor every time the model needs an update.

How do I know capability transfer worked?

Three signals, in order: (1) your team handles the first production incident without calling the vendor, (2) your team runs an evaluation cycle and ships a model update independently, and (3) six months after the engagement ends, your team has extended the system in ways the original engagement didn’t anticipate. If all three happen, the capability transferred.

What to do next

Enterprise AI capability building is the difference between an AI engagement that ends and an AI engagement that compounds. The deliverable matters, but the team that runs the deliverable matters more. The vendor question isn’t “can they ship it?” Most can. It’s “will my team be able to extend it after they leave?”

If you’re scoping an AI engagement and want to evaluate whether capability transfer is built into the proposal, the six questions in this piece are the place to start. If you want to see how Cabin structures capability transfer into a specific engagement, let’s talk about your use case. We’d rather show you the model on real work than describe it in the abstract.

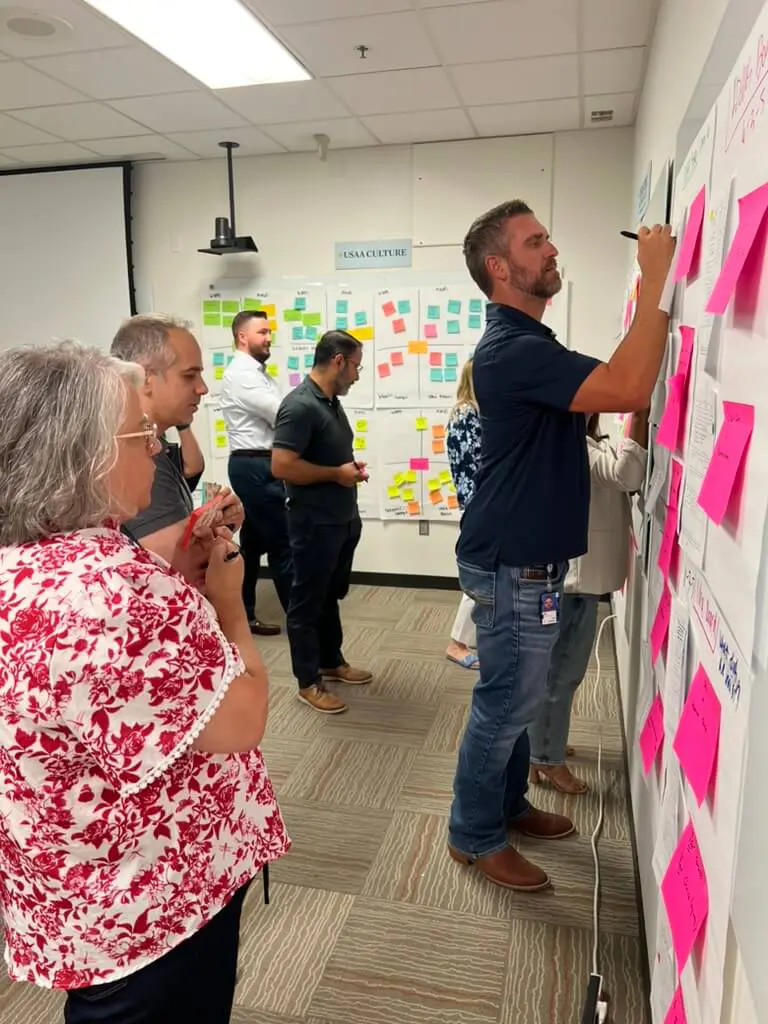

About Cabin: We’re an AI transformation consultancy that architects AI-native products and builds your team’s capability while we work, so the capability stays when we go. Notable clients include FICO, First Horizon, and Mastercard. The team you meet is the team that ships.